AI Digest

MAY / JUNE 2026

~ ~ \\ // ~ ~

authored by

Noah Kenney

16 APR 2026

Copyright (C) SAFE AI Foundation

'AI Governance: A Practical Approach'

AI governance is failing at the exact moment organizations need it most. Although AI use is increasing rapidly, a 2026 survey found that AI governance lags significantly behind, that confidence in organizational responses to AI-related incidents has declined, and that gaps in knowledge and training are the leading barrier to implementing responsible AI practices [1]. These findings highlight the need for accessible, standardized approaches to AI governance that bridge the gap from theory to implementation.

The problem is not a lack of proposals. Governments, standards bodies, and consultancies have produced an abundance of AI governance frameworks, yet no single approach has achieved wide acceptance. Many of these efforts run to hundreds of pages, offering comprehensive but unwieldy guidance that overwhelms rather than guides. Practitioners are left confronting voluminous reports that provide little clarity on where to begin or how to operationalize their recommendations. What the field needs is not more exhaustive documentation but a concise, structured approach that organizations can actually implement.

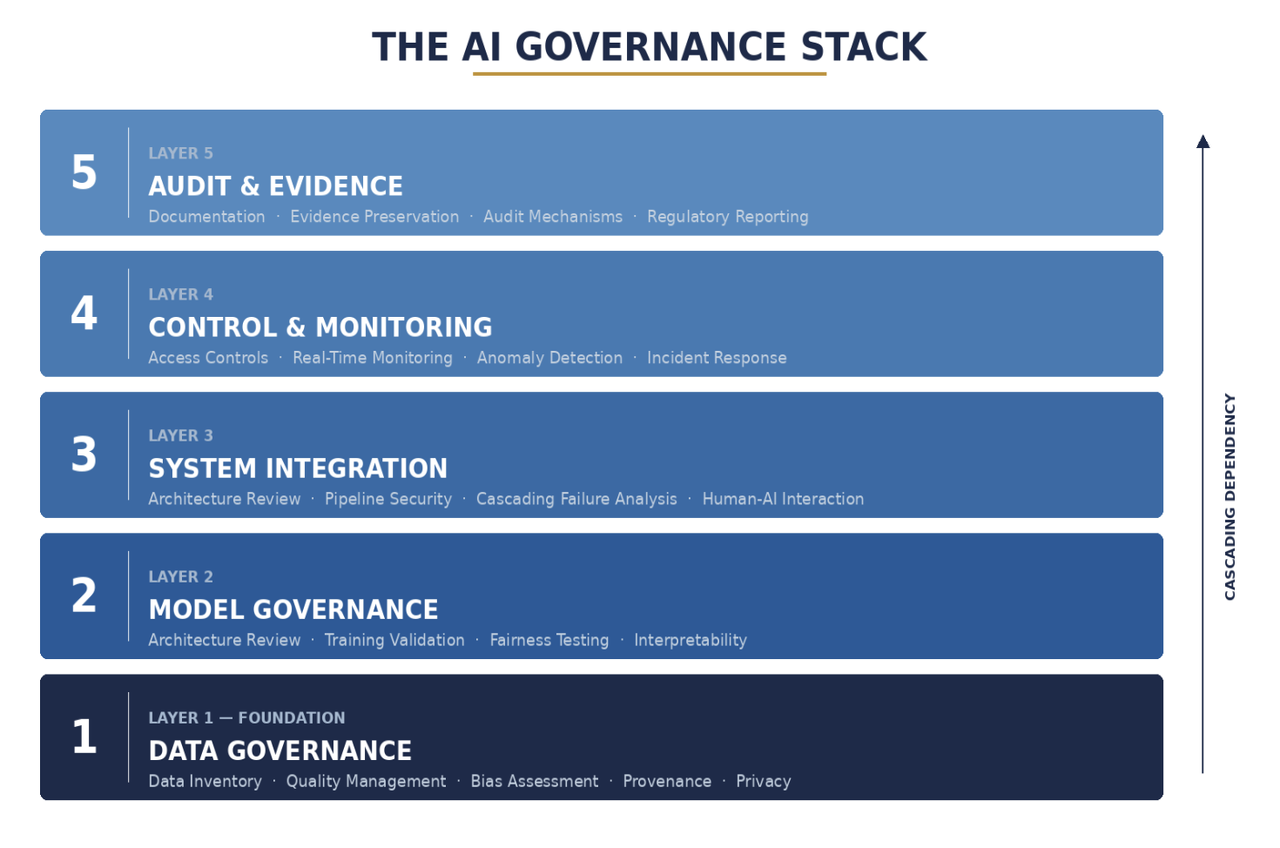

In ‘Governing Intelligence: Law, Privacy, Security, and Compliance in the Age of Artificial Intelligence’ [2], I outline a five-layer, principles-based approach to AI governance designed for practitioners across industries.

Each of the five layers builds on the one below it, providing technical teams, legal and compliance functions, and executive leadership with a shared vocabulary and clear accountability assignments. Let’s take a look at each layer.

LAYER 1 - DATA GOVERNANCE: This layer ensures the data feeding AI systems is high-quality, free of hidden bias, and privacy-compliant. Bad data produces bad outcomes; this layer prevents that at the source.

LAYER 2 - MODEL GOVERNANCE: This layer ensures AI models are reviewed for fairness, tested for robustness, and documented before deployment.

LAYER 3 - SYSTEM INTEGRATION: This layer manages how AI connects with existing business systems so failures in one area do not cascade into others.

LAYER 4 - CONTROL & MONITORING: This layer provides real-time oversight of deployed AI, including tracking performance, detecting anomalies, and enabling human override.

LAYER 5 - AUDIT & EVIDENCE: This layer creates the documentation and compliance evidence that regulators, auditors, and stakeholders require.

The framework assigns clear accountability: data stewards own Layer 1, ML engineering owns Layer 2, platform/infrastructure teams own Layer 3, security/operations own Layer 4, and compliance/legal teams own Layer 5. Governance has always been interdisciplinary, but dividing it into five layers gives the framework a clearer accountability chain.
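As a minimal sketch, the accountability chain above can be written down as a simple lookup. The layer names and owners come from the text; the `Issue` type, the function names, and the rule that multi-layer issues go to a governance committee are hypothetical illustrations, not part of the framework itself.

```python
from dataclasses import dataclass
from enum import IntEnum


class Layer(IntEnum):
    DATA_GOVERNANCE = 1
    MODEL_GOVERNANCE = 2
    SYSTEM_INTEGRATION = 3
    CONTROL_AND_MONITORING = 4
    AUDIT_AND_EVIDENCE = 5


# Owners as named in the text; the dictionary layout is illustrative only.
LAYER_OWNERS = {
    Layer.DATA_GOVERNANCE: "data stewards",
    Layer.MODEL_GOVERNANCE: "ML engineering",
    Layer.SYSTEM_INTEGRATION: "platform/infrastructure",
    Layer.CONTROL_AND_MONITORING: "security/operations",
    Layer.AUDIT_AND_EVIDENCE: "compliance/legal",
}


@dataclass(frozen=True)
class Issue:
    """Hypothetical record of a governance issue and the layers it touches."""
    description: str
    layers: tuple[Layer, ...]


def accountable_owners(issue: Issue) -> list[str]:
    """Return the owner of every layer the issue touches, lowest layer first."""
    return [LAYER_OWNERS[layer] for layer in sorted(set(issue.layers))]


def needs_committee(issue: Issue) -> bool:
    """Assumed rule: an issue that spans more than one layer is escalated."""
    return len(set(issue.layers)) > 1
```

The point of the sketch is only that "who owns this?" should have a deterministic answer; the judgment about what to do next remains with the people named.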

A critical point deserves emphasis: this framework is not a software solution. No platform, dashboard, or vendor tool, however sophisticated, can substitute for the organizational commitment required to make AI governance operational. Each layer of the governance stack must be executed by people through defined processes, supported by institutional accountability structures that exist independent of any particular technology. The question practitioners most frequently ask is not what the layers are, but how organizations actually implement them. The answer lies in governance operating models, not software.

Implementation begins with governance roles, not technology procurement. Data stewards at Layer 1 must establish and enforce data quality standards, conduct bias reviews of training datasets, and maintain data lineage documentation. This form of work requires domain expertise and institutional knowledge no automated tool can replicate.

At Layer 2, model review boards must conduct fairness assessments, stress-test models against adversarial inputs, and produce model cards documenting intended use, known limitations, and performance benchmarks. These are judgment-intensive activities.

Enterprise architects at Layer 3 must map AI system dependencies, define failure boundaries, and establish rollback procedures, all of which are decisions that require understanding the business context in which AI operates.

Security and operations personnel at Layer 4 must define monitoring thresholds, establish escalation protocols, and maintain the authority and capability to intervene when systems behave unexpectedly.

And compliance and legal teams at Layer 5 must design audit trails, interpret evolving regulatory requirements, and translate them into actionable controls.

The common thread across all five layers is that governance is executed through cross-functional collaboration, standing committees, recurring reviews, and documented decision-making processes. Organizations that succeed at AI governance typically establish an AI governance committee with representation from each layer’s accountable function, define clear escalation paths for issues that cross layer boundaries, and invest in training programs that build governance literacy across the organization, not just within compliance teams. Technology supports governance by providing tooling for monitoring, documentation, and workflow automation, but the decisions about what is acceptable, what risk is tolerable, and when human intervention is required are inherently human judgments. Organizations that treat AI governance as a software procurement exercise will find themselves with dashboards but no accountability, and accountability is what governance ultimately requires.

This exposes the central failure of most AI governance programs: they cannot prevent behavior at the moment it occurs.

In a companion paper to the ‘Governing Intelligence’ textbook [3], I argued that as AI systems shift from advisory tools to autonomous agents, runtime enforcement (the ability to constrain system behavior at the moment of execution) is not a missing sixth layer of the AI Governance Stack, but a cross-cutting capability that must be embedded within all five existing layers (data, model, system, monitoring, audit). This is because the conditions that determine whether an action is admissible span the entire stack. The paper proposes six design principles for distributing enforcement across the governance lifecycle, emphasizing continuous authorization, contemporaneous evidence, and graceful degradation.
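To make the idea of runtime enforcement concrete, here is a minimal sketch of a gate that evaluates every proposed action at the moment of execution. It is not the design from the companion paper: the class names, the check names, and the precedence rule (deny on a failed check, route to a human when a check itself breaks) are assumptions chosen for illustration. It shows three of the named ideas in miniature: authorization happens on every call, each decision is logged as it is made, and a broken check degrades to human review instead of silently allowing the action.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Callable


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    HUMAN_REVIEW = "human_review"


@dataclass
class Action:
    name: str
    context: dict


# A check returns True when the proposed action is admissible for its layer.
Check = Callable[[Action], bool]


@dataclass
class RuntimeGate:
    checks: dict[str, Check]
    evidence: list[dict] = field(default_factory=list)

    def authorize(self, action: Action) -> Decision:
        failed, errored = [], []
        for name, check in self.checks.items():
            try:
                if not check(action):
                    failed.append(name)
            except Exception:
                # Graceful degradation: a check that cannot run never allows.
                errored.append(name)
        if failed:
            decision = Decision.DENY
        elif errored:
            decision = Decision.HUMAN_REVIEW
        else:
            decision = Decision.ALLOW
        # Contemporaneous evidence: record the decision as it is made.
        self.evidence.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action.name,
            "decision": decision.value,
            "failed_checks": failed,
            "errored_checks": errored,
        })
        return decision


# Hypothetical checks, one per layer they draw on.
APPROVED_MODELS = {"v1"}
gate = RuntimeGate(checks={
    "layer1_lineage_documented": lambda a: a.context.get("lineage_documented", False),
    "layer2_model_approved": lambda a: a.context["model_version"] in APPROVED_MODELS,
})
```

Because the checks draw on conditions owned by different layers, a gate like this only works if each accountable function maintains its own check, which is why enforcement cuts across the stack rather than sitting on top of it.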

The technical methodologies used in AI governance will continue to evolve, as will the regulatory and operational landscape. What should remain constant, however, is the principle that governance must be structural rather than reactive. Most enterprise AI governance programs are structurally incapable of preventing failure because they rely on post hoc controls. The five-layer AI Governance Stack addresses this by making governance an engineering discipline as much as a legal one.

AI adoption is no longer limited to data science teams working in sandboxed environments. Foundation models are being integrated into customer-facing applications, supply chain decision engines, and internal workflow automation at a pace that outstrips the capacity of traditional risk management functions.

When a single large language model can be embedded across dozens of business processes simultaneously, the scale of a governance failure grows proportionally, whether it originates in a data quality issue at Layer 1 or a monitoring gap at Layer 4. The layered approach does not eliminate this risk, but it makes the risk legible by telling an organization exactly where to look when something goes wrong and who is responsible for the remediation.

Consider a financial services firm deploying an AI model to automate small business loan approvals. A subtle bias in historical training data (Layer 1) leads the model (Layer 2) to systematically under-score applicants from certain ZIP codes. The model is integrated directly into the approval workflow (Layer 3), where decisions are executed without meaningful human review. Because monitoring is limited to aggregate performance metrics rather than segmented outcomes (Layer 4), the bias goes undetected in production. The issue is only discovered months later during a compliance audit (Layer 5), triggering regulatory scrutiny, reputational damage, and costly remediation.
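The Layer 4 gap in this scenario, aggregate metrics hiding a segment-level problem, can be illustrated with a short sketch. The record fields (`zip_group`, `approved`), the function names, and the 0.8 ratio threshold are all hypothetical; an organization would choose its own segments and thresholds through the governance process described earlier.

```python
from collections import defaultdict


def approval_rates(decisions, segment_key):
    """Return (overall_rate, {segment: rate}) for a list of decision records."""
    if not decisions:
        raise ValueError("no decisions to evaluate")
    totals = defaultdict(int)
    approved = defaultdict(int)
    for record in decisions:
        segment = record[segment_key]
        totals[segment] += 1
        approved[segment] += int(record["approved"])
    overall = sum(approved.values()) / sum(totals.values())
    by_segment = {s: approved[s] / totals[s] for s in totals}
    return overall, by_segment


def flag_segments(by_segment, min_ratio=0.8):
    """Flag segments whose rate is below min_ratio times the best segment's rate.

    The 0.8 default is an illustrative assumption, not a regulatory standard.
    """
    best = max(by_segment.values())
    if best == 0:
        return []
    return sorted(s for s, rate in by_segment.items() if rate / best < min_ratio)


# Synthetic example data: segment A is large, segment B is small.
decisions = (
    [{"zip_group": "A", "approved": True}] * 90
    + [{"zip_group": "A", "approved": False}] * 10
    + [{"zip_group": "B", "approved": True}] * 5
    + [{"zip_group": "B", "approved": False}] * 5
)
overall, by_segment = approval_rates(decisions, "zip_group")
flagged = flag_segments(by_segment)
```

In this synthetic data the overall approval rate is about 86 percent, which looks healthy, while segment B is approved only half the time and is the one segment flagged. A dashboard that reports only the aggregate would never surface it.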

The failure is not isolated to a single layer; it is the result of breakdowns across the entire governance stack. Critically, at no point in the execution path was there a mechanism to constrain or override the model’s behavior in real time. This is precisely the category of failure that requires embedded, cross-layer enforcement rather than post hoc detection.

Additionally, the framework is designed to be regulation-agnostic. Whether an organization is operating under the EU AI Act, sector-specific guidance from U.S. federal agencies, or emerging frameworks in jurisdictions across Asia and Latin America, the five layers map to the core obligations that regulators consistently require: data provenance, model transparency, system reliability, ongoing monitoring, and auditable evidence of compliance.

By anchoring governance to these enduring principles rather than to the text of any single statute, organizations can adapt to regulatory change without rebuilding their governance programs from scratch.

Ultimately, the goal of the five-layer approach is not to slow AI adoption but to make it sustainable. Organizations that invest in governance infrastructure now (assigning accountability, instrumenting their systems for oversight, and building the evidentiary record that regulators and stakeholders will demand) position themselves to deploy AI with the confidence and velocity that ungoverned systems cannot sustain over time.

~~~ end ~~~

REFERENCES

[2] Free book on Governing Intelligence. See: https://digital520.com/Governing_Intelligence_Publication_Version_NMK.pdf

[3] Information about RUNTIME Governance. See: https://digital520.com/platform/learn/assets/Runtime_Enforcement_AI_Governance_Stack.pdf

AI Governance & Standards. See: https://safeaifoundation.com/ai-governance and https://safeaifoundation.com/ai-standards

Disclaimer: The information in this digest is provided “as is” by the SAFE AI FOUNDATION, USA. The use of the information provided here is subject to the user’s own risk, accountability, and responsibility. The SAFE AI FOUNDATION and the author are not responsible for the use of the information by the user or reader. The opinions expressed in this article are solely those of the author, not the SAFE AI Foundation. All copyrights related to this article are reserved by the author. Please reference this article if you wish to cite it elsewhere.

Note: The SAFE AI Foundation is a non-profit organization registered in the State of California, and it welcomes input and feedback from readers and the public. If you have things to add concerning AI Governance and would like to volunteer or donate, please email us at: contact@safeaifoundation.com